Anthropic has introduced a new feature in its Claude Opus 4 and 4.1 models that allows the AI to choose to end certain conversations.

According to the company, this only happens in particularly serious or concerning situations. For example, Claude may stop engaging if you repeatedly try to get it to discuss child sexual abuse or terrorism, or if you persist in other “harmful or abusive” interactions.

This feature was added not just because such topics are controversial, but because it gives the AI a way out when multiple attempts at redirection have failed and productive dialogue is no longer possible.

If a conversation ends, the user cannot continue that thread but can start a new chat or edit previous messages.

The initiative is part of Anthropic’s research on AI well-being, which explores how AI can be protected from stressful interactions.

Melden Sie sich an, um einen Kommentar hinzuzufügen

Andere Beiträge in dieser Gruppe

If you’re just dying to talk to Copilot within Excel, good news: Copi

Snapdragon laptops are good, and I say that as someone who r

Printers are generally awful. They’re a remnant of an era of computin

Nvidia’s GeForce Now service is offering its Ultimate tier subscriber

Chinese company Biwin has unveiled a new type of storage drive called

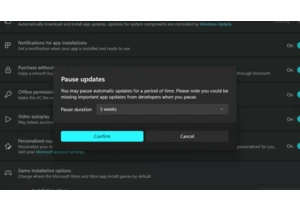

Normally, automatic software updates are a good thing. They keep you