Ben and Ryan are joined by Robin Gupta for a conversation about benchmarking and testing AI systems. They talk through the lack of trust and confidence in AI, the inherent challenges of nondeterministic systems, the role of human verification, and whether we can (or should) expect an AI to be reliable. https://stackoverflow.blog/2024/05/24/would-you-board-a-plane-safety-tested-by-genai/

Other posts in this group

Ryan welcomes Evan You, the creator of Vue.js, to explore the origins of Vue.js, the challenges faced during its development, and the project’s growth over a decade. They dive into potential integrati

Wenjing Zhang, VP of Engineering, and Caleb Johnson, Principal Engineer at LinkedIn, sit down with Ryan to discuss how semantic search and AI have transformed LinkedIn’s job search feature. They explo

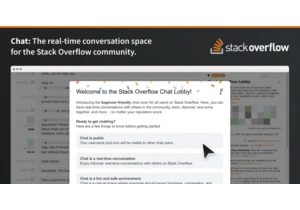

Improving the place where developers have real conversations and real collaboration https://stackoverflow.blog/2025/08/11/renewing-chat-on-stack-overflow/

Ryan welcomes Paul Everitt, developer advocate at JetBrains and an early adopter of Python, to discuss the history, growth, and future of Python. They cover Python’s pivotal moments and rise alongside

In the age of AI, making applications and writing code has never been easier. But is the result any good? Here's what vibe coding is like for someone without technical skills. https://stackoverflo

Quinn Slack, CEO and co-founder of Sourcegraph, joins the show to dive into the implications of AI coding tools on the software engineering lifecycle. They explore how AI tools are transforming the wo

Innovation is at the heart of any successful, growing company, and often that culture begins with an engaged, interconnected organization. https://stackoverflow.blog/2025/08/04/cross-pollination-as-a