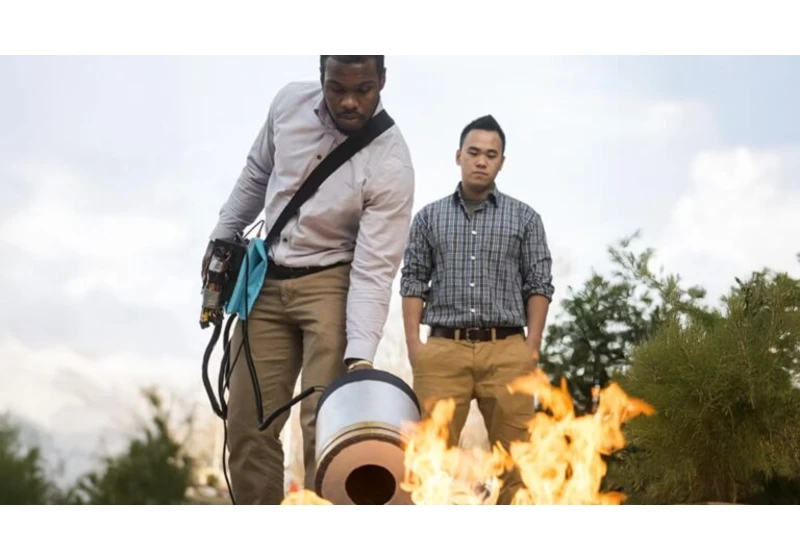

Article URL: https://wowparrot.com/using-sound-waves-to-put-out-fire/

Comments URL: https://news.ycombinator.com/item?id=44530807

Points: 8

# Comments: 2

Article URL: https://patternproject.substack.com/p/from-the-mac-to-the-mystical-bill

Comments URL: https://news.ycombinator.com/item?id=44530767

Points: 87

# Comments: 30

Article URL: https://apnews.com/article/ftc-click-to-cancel-30db2be07fdcb8aefd0d4835abdb116a

Comments URL: https://news.ycombinator.com/item?id=44531561

Points: 15

# Comments: 10

Article URL: https://www.nytimes.com/2025/07/10/world/europe/uk-post-office-scandal-report.html

Comments URL: https://news.ycombinator.com/item?id=44531120

Points: 123

# Comments: 64

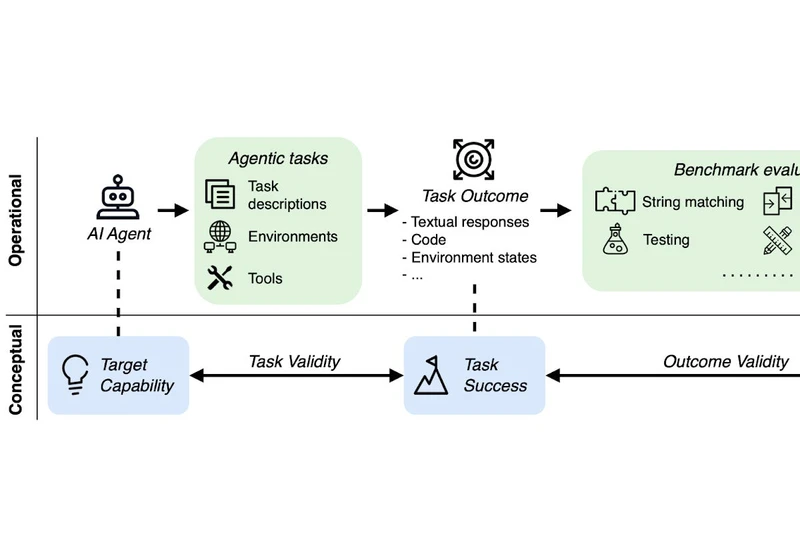

Article URL: https://ddkang.substack.com/p/ai-agent-benchmarks-are-broken

Comments URL: https://news.ycombinator.com/item?id=44531697

Points: 3

# Comments: 0

Article URL: https://decrypt.co/39750/184-billion-bitcoin-anonymous-creator

Comments URL: https://news.ycombinator.com/item?id=44528509

Points: 4

# Comments: 0

Hey HN, Henry and Roman here - we've been building a cross-platform framework for deploying LLMs, VLMs, Embedding Models and TTS models locally on smartphones.

Ollama enables deploying LLMs locally on laptops and edge servers; Cactus enables deploying them on phones. Deploying directly on phones makes it possible to build AI apps and agents capable of phone use without breaking privacy, and supports real-time inference without network latency. We have also seen personalised RAG pipelines for users, and more.

A

Article URL: https://openfront.io/

Comments URL: https://news.ycombinator.com/item?id=44528943

Points: 33

# Comments: 5