We wrote our inference engine in Rust; it is faster than llama.cpp in all of the use cases. Your feedback is very welcome. It is written from scratch, with the idea that you can add support for any kernel and platform.

Comments URL: https://news.ycombinator.com/item?id=44570048

Points: 72

Number of comments: 23

Created: 7h ago | 15.07.2025, 16:50:31


Andere Beiträge in dieser Gruppe

Article URL: https://underwriting-superintelligence.com/

Comments URL: http

Article URL: https://github.com/hazelgrove/hazel

Comments URL: https://news.ycombin

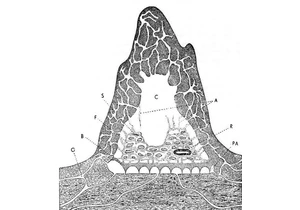

Article URL: https://aethermug.com/posts/human-stigmergy

Comments URL: http

Article URL: https://cartesia.ai/blog/hierarchical-modeling

Article URL: https://calv.info/openai-reflections

Comments URL: https://news.ycomb